Data issues are discovered too late: missing records, schema drift, broken aggregations or silent transformation errors that surface only when an audit fails.

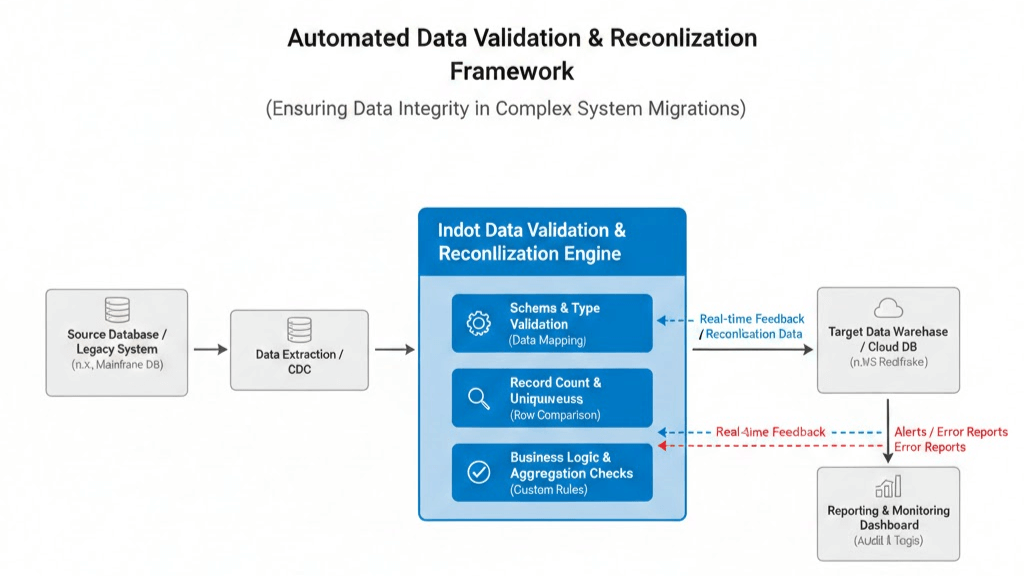

A validation engine that continuously compares source vs target and produces traceable reconciliation, alerts and reports.

Validate mappings, datatypes, nullable rules and required fields across systems.
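As a rough illustration of what a schema check can look like, the sketch below compares mapped columns on datatype and nullability. The `Column` class, the `compare_schemas` function and the example column names are hypothetical and chosen only for this example; a real deployment would read schemas from the actual source and target catalogs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Column:
    """Hypothetical column description used only in this sketch."""
    dtype: str       # logical type name, e.g. "int" or "string"
    nullable: bool   # whether NULL values are allowed


def compare_schemas(source, target, mapping):
    """Compare mapped columns; an empty result means no mismatch was found.

    source, target: dicts of column name -> Column
    mapping: dict of source column name -> target column name
    """
    findings = []
    for src_name, tgt_name in mapping.items():
        src = source.get(src_name)
        tgt = target.get(tgt_name)
        if src is None:
            findings.append(f"mapping references unknown source column {src_name}")
            continue
        if tgt is None:
            findings.append(f"missing target column {tgt_name} (mapped from {src_name})")
            continue
        if src.dtype != tgt.dtype:
            findings.append(f"{src_name} -> {tgt_name}: datatype {src.dtype} != {tgt.dtype}")
        if src.nullable != tgt.nullable:
            findings.append(
                f"{src_name} -> {tgt_name}: nullable {src.nullable} != {tgt.nullable}"
            )
    return findings


# Example with made-up schemas: the email column is nullable only in the source.
source = {"id": Column("int", False), "email": Column("string", True)}
target = {"customer_id": Column("int", False), "email": Column("string", False)}
for finding in compare_schemas(source, target, {"id": "customer_id", "email": "email"}):
    print(finding)
```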

Compare row counts and detect duplicates after transformations.
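A minimal sketch of a row-level check, assuming both sides fit in memory as lists of dicts and share a business key. The `reconcile_rows` function and the `id` key are hypothetical names used only here. The example also shows why a matching row count alone is not enough: a duplicate can hide a missing record.

```python
from collections import Counter


def reconcile_rows(source_rows, target_rows, key):
    """Compare row counts and key coverage between two lists of dict rows."""
    src_keys = Counter(row[key] for row in source_rows)
    tgt_keys = Counter(row[key] for row in target_rows)
    return {
        "source_count": len(source_rows),
        "target_count": len(target_rows),
        "count_match": len(source_rows) == len(target_rows),
        # Keys that appear more than once after the transformation.
        "duplicates_in_target": sorted(k for k, n in tgt_keys.items() if n > 1),
        # Keys present in the source but absent from the target.
        "missing_in_target": sorted(k for k in src_keys if k not in tgt_keys),
        # Keys present in the target that the source never contained.
        "unexpected_in_target": sorted(k for k in tgt_keys if k not in src_keys),
    }


# Made-up data: counts match (3 and 3), yet key 2 is duplicated and key 3 is lost.
source_rows = [{"id": 1}, {"id": 2}, {"id": 3}]
target_rows = [{"id": 1}, {"id": 2}, {"id": 2}]
print(reconcile_rows(source_rows, target_rows, key="id"))
```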

Validate KPIs, totals and domain-specific logic.
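One way to check a total, sketched under the assumption that amounts arrive as decimal strings and that a small tolerance is acceptable. The `check_total` function and the default tolerance of 0.01 are illustrative choices, not a prescribed rule; domain-specific logic would replace the simple sum.

```python
from decimal import Decimal


def check_total(source_amounts, target_amounts, tolerance=Decimal("0.01")):
    """Compare summed amounts on both sides using exact decimal arithmetic."""
    src_total = sum((Decimal(a) for a in source_amounts), Decimal("0"))
    tgt_total = sum((Decimal(a) for a in target_amounts), Decimal("0"))
    difference = abs(src_total - tgt_total)
    return {
        "source_total": src_total,
        "target_total": tgt_total,
        "difference": difference,
        "within_tolerance": difference <= tolerance,
    }


# Made-up amounts: one record is missing from the target, so the totals diverge.
result = check_total(["10.00", "20.50", "5.25"], ["10.00", "20.50"])
print(result["difference"], result["within_tolerance"])
```

Decimal is used instead of float so that rounding noise is not mistaken for a real discrepancy.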

Reconciliation logs, error reports and dashboards for governance and audits.

Architecture

Source systems feed ETL/CDC pipelines. Validation runs in parallel with those pipelines, comparing source and target, and produces reconciliation feedback, alerts and audit trails.
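The flow described here can be sketched as a small runner that executes named checks, writes one audit record per check and raises an alert for each failure. Everything in it is an assumption made for illustration: the `run_checks` function, the record fields and the in-memory lists standing in for a real audit store and alerting channel.

```python
import json
from datetime import datetime, timezone


def run_checks(checks, audit_log, alerts):
    """Run each check, append a JSON audit record, and collect alerts on failure.

    checks: dict of check name -> zero-argument callable returning (passed, detail)
    audit_log, alerts: lists standing in for an audit store and an alert channel
    """
    for name, check in checks.items():
        passed, detail = check()
        record = {
            "check": name,
            "passed": passed,
            "detail": detail,
            "checked_at": datetime.now(timezone.utc).isoformat(),
        }
        audit_log.append(json.dumps(record))  # every result is kept, pass or fail
        if not passed:
            alerts.append(f"{name}: {detail}")


# Made-up checks with fixed outcomes, just to show the shape of the output.
audit_log, alerts = [], []
run_checks(
    {
        "row_count": lambda: (True, "3 == 3"),
        "revenue_total": lambda: (False, "difference 5.25"),
    },
    audit_log,
    alerts,
)
print(alerts)
```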

Need data integrity you can prove?

We tailor validation rules and reconciliation strategy to your data model and compliance needs.